DeepSeek-V4-Pro (DeepSeek)

|

Olmo 3 (Ai2)

|

|||||

About

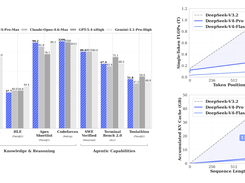

DeepSeek-V4-Pro is a large-scale Mixture-of-Experts (MoE) language model designed for advanced reasoning, coding, and long-context understanding. It has 1.6 trillion total parameters, of which 49 billion are activated, so it delivers high performance while remaining efficient. The model supports a context window of up to one million tokens, which lets it process extensive documents and workflows. It uses a hybrid attention architecture to improve long-context performance and reduce computational cost. DeepSeek-V4-Pro is trained on over 32 trillion tokens, which broadens its knowledge and strengthens its reasoning. Its training also uses optimization techniques aimed at stability and faster convergence. The model supports multiple reasoning modes, so users can trade speed against accuracy as needed. Overall, it is a powerful open-source option for complex AI tasks and large-scale applications.
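To put the parameter counts above in proportion, the short sketch below computes what share of the model is activated. It uses only the two figures quoted in the description. The function name `active_fraction` is illustrative, and reading "activated" as "used per token" is an assumption based on how MoE models usually work, not something the description states.

```python
# Figures quoted in the description above.
TOTAL_PARAMS = 1.6e12   # 1.6 trillion total parameters
ACTIVE_PARAMS = 49e9    # 49 billion activated parameters


def active_fraction(active: float, total: float) -> float:
    """Return the share of parameters that are activated (hypothetical helper)."""
    if total <= 0:
        raise ValueError("total must be positive")
    return active / total


fraction = active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS)
print(f"Activated share of parameters: {fraction:.2%}")  # prints 3.06%
```

Under these figures, roughly 3% of the weights are activated, which is the basis for the efficiency claim in the description.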

|

About

Olmo 3 is a fully open model family available in 7 billion and 32 billion parameter variants. It delivers high-performing base, reasoning, instruction, and reinforcement-learning models, and it also exposes the entire model flow: raw training data, intermediate checkpoints, training code, and provenance tooling. The models support a 65,536-token context window. Training starts from the Dolma 3 dataset (≈9 trillion tokens), a disciplined mix of web text, scientific PDFs, code, and long-form documents. Pre-training, mid-training, and long-context phases shape the base models, which are then post-trained with supervised fine-tuning, direct preference optimisation, and RL with verifiable rewards to yield the Think and Instruct variants. The 32B Think model is described as the strongest fully open reasoning model to date, competitively close to closed-weight peers in math, code, and complex reasoning.
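As a rough illustration of what the two context windows quoted on this page mean in practice, the sketch below checks whether an input of a given token count fits each window. The window sizes come from the two descriptions. The helper `fits_in_window` and the sample token count are hypothetical, and the check ignores any tokens reserved for the model's output.

```python
# Context windows as quoted in the two product descriptions.
OLMO3_CONTEXT = 65_536               # tokens (2**16)
DEEPSEEK_V4_PRO_CONTEXT = 1_000_000  # "up to one million tokens"


def fits_in_window(n_tokens: int, window: int) -> bool:
    """Return True if an input of n_tokens fits in the window (hypothetical helper)."""
    if n_tokens < 0:
        raise ValueError("n_tokens must be non-negative")
    return n_tokens <= window


# Hypothetical input size, chosen only for illustration.
document_tokens = 200_000

print("Fits Olmo 3:", fits_in_window(document_tokens, OLMO3_CONTEXT))
print("Fits DeepSeek-V4-Pro:", fits_in_window(document_tokens, DEEPSEEK_V4_PRO_CONTEXT))

# Ratio between the two quoted windows.
ratio = DEEPSEEK_V4_PRO_CONTEXT / OLMO3_CONTEXT
print(f"Window ratio: {ratio:.1f}x")
```

An input that exceeds the smaller window would need to be split or summarised before use, which is a workflow decision rather than something either description specifies.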

|

|||||

Platforms Supported

Windows

Mac

Linux

Cloud

On-Premises

iPhone

iPad

Android

Chromebook

|

Platforms Supported

Windows

Mac

Linux

Cloud

On-Premises

iPhone

iPad

Android

Chromebook

|

|||||

Audience

AI researchers, developers, and enterprises seeking a powerful open-source language model for large-scale reasoning, coding, and long-context AI applications

|

Audience

AI researchers, developers and enterprises needing a tool offering foundation models to inspect, fine-tune or deploy with full provenance and auditability

|

|||||

Support

Phone Support

24/7 Live Support

Online

|

Support

Phone Support

24/7 Live Support

Online

|

|||||

API

Offers API

|

API

Offers API

|

|||||

Pricing

Free

Free Version

Free Trial

|

Pricing

Free

Free Version

Free Trial

|

|||||

Training

Documentation

Webinars

Live Online

In Person

|

Training

Documentation

Webinars

Live Online

In Person

|

|||||

Company Information: DeepSeek

Founded: 2023

China

deepseek.com

|

Company Information: Ai2

Founded: 2014

United States

allenai.org/blog/olmo3

|

|||||

Integrations

Buda

DeepSeek

MoClaw

OpenClaw

Together AI

ZooClaw
