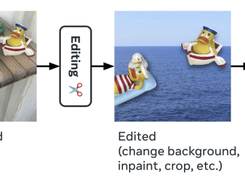

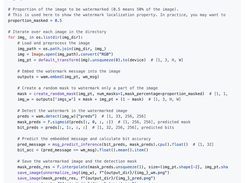

Watermark Anything (WAM) is a deep learning framework for embedding and detecting localized watermarks in digital images. Developed by Facebook Research, it lets users insert one or more watermarks into selected image regions while preserving visual quality and keeping the watermarks recoverable. Unlike traditional watermarking methods that embed uniformly across the whole image, WAM supports spatially localized watermarks, enabling targeted protection of specific regions or objects. The model is trained to balance imperceptibility (minimal visual distortion) against robustness to transformations and edits such as cropping or motion.

Features

- Embeds localized or multiple watermarks directly into image regions

- Enables watermark detection and bit-level decoding from images

- Balances imperceptibility vs. robustness through a configurable scaling factor

- Offers pretrained models trained on the COCO (CC-BY-NC) and SA-1B (MIT) datasets

- Implements robustness-focused training and fine-tuning pipelines

- Supports multi-watermark embedding for complex content protection scenarios
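
To make the scaling-factor trade-off and bit-level decoding from the list above concrete, here is a minimal, dependency-free sketch. It is not WAM's API: `embed_localized`, `max_distortion`, `bit_accuracy`, and the `scaling` parameter are hypothetical names chosen for illustration, and the watermark signal `delta` is assumed to be given rather than produced by a trained embedder.

```python
# Illustrative sketch only: every name here is hypothetical, not WAM's API.

def embed_localized(image, delta, mask, scaling=2.0):
    """Add a scaled watermark signal to the masked region of a grayscale image.

    image:   rows of pixel values in [0, 255]
    delta:   rows of watermark signal values (same shape as image)
    mask:    rows of 0/1 flags; 1 marks the pixels to watermark
    scaling: larger values favor robustness, smaller ones imperceptibility
    """
    out = []
    for img_row, d_row, m_row in zip(image, delta, mask):
        row = []
        for px, d, m in zip(img_row, d_row, m_row):
            value = px + scaling * d * m              # unmasked pixels are unchanged
            row.append(min(255.0, max(0.0, value)))   # clamp to the valid range
        out.append(row)
    return out


def max_distortion(original, watermarked):
    """Largest absolute per-pixel change introduced by embedding."""
    return max(
        abs(a - b)
        for row_o, row_w in zip(original, watermarked)
        for a, b in zip(row_o, row_w)
    )


def bit_accuracy(decoded, expected):
    """Fraction of decoded message bits that match the expected message."""
    if len(decoded) != len(expected):
        raise ValueError("messages must have the same length")
    return sum(d == e for d, e in zip(decoded, expected)) / len(expected)


image = [[100.0, 100.0], [100.0, 100.0]]
delta = [[1.0, -1.0], [1.0, -1.0]]
mask = [[1, 1], [0, 0]]  # watermark only the top row

weak = embed_localized(image, delta, mask, scaling=1.0)
strong = embed_localized(image, delta, mask, scaling=4.0)
```

In this toy setup, raising `scaling` increases the per-pixel change inside the mask (a stronger but more visible signal) while pixels outside the mask stay untouched. `bit_accuracy` shows one common way to score a decoded message against the embedded one.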

Categories

AI Models

License

Creative Commons Attribution License, MIT License
