Menu

▾

▴

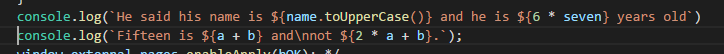

#1848 Add highlighting for Python literal string formatting (PEP 498)

closed-fixed

3

2017-02-20

2016-08-03

No

See this: https://www.python.org/dev/peps/pep-0498/

Add formatting for strings like f'hello {name}' with "name" being shown as a Python expression.

I'll leave this for someone interested. Accurately handling the syntax is difficult as it would require dealing with the different phases of string interpretation. For example the '{' may not be a literal '{' but instead '\u007b'.

I understand. For what it's worth, the fact that Python would parse '\u007b' as '{' in a literal string is not a feature as much as it is a byproduct. I think that very few people are going to use this. (The PEP says "These examples aren't generally useful", which is an understatement.) So ignoring the '\u007b' case can still produce a solution that works for 99.99% of the cases. But if it's important for you to get it absolutely right, I understand that.

Another note: I believe that the 'f' right before the string should be given the same syntax type as the 'b', 'u', or 'r' that can appear before a Python string.

I'm happy that you accepted it! For what it's worth, I think this one (f-strings) is more important than highlighting for function annotations.

It's just part of updating issue tracker states to be more consistent, including downgrading the priority of this issue. I'm not planning on implementing this myself.

I'm starting to work on this and a few other Python lexer issues. Attached is an hg export of changes to color the f prefix, recognize non-ASCII Unicode identifiers, and a fix so @1 isn't colored as a decorator.

I'm considering using indicators to identify strings as an f-string rather than creating 4 new states for the 4 types of f strings -- an f string would have one of the 4 string states and the characters would be tagged with an indication. Is this a workable approach?

While tagging f strings with an indicator would work, client applications are less likely to understand it. It's quite likely that applications and users will want to highlight f strings distinctly and using new states will be more compatible with current approaches.

There could be an issue with multiplying string types at some point but that doesn't mean an indicator is needed yet.

Setting indicators from lexers was intended more for cross-cutting concerns where, for example, a warning sign might be overlaid over part of a token.
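To make the "new states" option concrete, here is a minimal sketch of classifying a string opener into one of eight states: four for ordinary strings and four more for their f-string counterparts. The state names, the `VALID_PREFIXES` set, and `classify_opener` are hypothetical names for illustration only, not the lexer's actual constants or code.

```python
from enum import Enum
from typing import Optional


class StringState(Enum):
    # Hypothetical state names for illustration; not the lexer's real constants.
    STRING = "string"                    # "..."
    CHARACTER = "character"              # '...'
    TRIPLE = "triple"                    # '''...'''
    TRIPLE_DOUBLE = "triple_double"      # """..."""
    F_STRING = "f_string"                # f"..."
    F_CHARACTER = "f_character"          # f'...'
    F_TRIPLE = "f_triple"                # f'''...'''
    F_TRIPLE_DOUBLE = "f_triple_double"  # f"""..."""


# String prefixes accepted by Python 3.6, compared case-insensitively.
VALID_PREFIXES = {"", "r", "u", "b", "br", "rb", "f", "fr", "rf"}

# (is f-string, quote character, is triple-quoted) -> state
_STATES = {
    (False, '"', False): StringState.STRING,
    (False, "'", False): StringState.CHARACTER,
    (False, "'", True): StringState.TRIPLE,
    (False, '"', True): StringState.TRIPLE_DOUBLE,
    (True, '"', False): StringState.F_STRING,
    (True, "'", False): StringState.F_CHARACTER,
    (True, "'", True): StringState.F_TRIPLE,
    (True, '"', True): StringState.F_TRIPLE_DOUBLE,
}


def classify_opener(text: str) -> Optional[StringState]:
    """Return the state for text that begins with an optional prefix and a quote."""
    i = 0
    while i < len(text) and text[i] not in "'\"":
        i += 1
    prefix = text[:i].lower()
    if i == len(text) or prefix not in VALID_PREFIXES:
        return None  # no quote found, or the leading characters are not a prefix
    quote = text[i]
    is_triple = text.startswith(quote * 3, i)
    return _STATES[("f" in prefix, quote, is_triple)]
```

With distinct states, an application can style f-strings differently through its existing per-state style settings, without needing to know about any indicator.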

The property definition appears to reference the wrong field (should be 'stringsF') and shows a b"" example instead of an f"" example.

Changed to 'stringsF' and 'f"var={var}"' and added a period to the unicode.identifiers text.

Unsure about needing control over recognizing Unicode identifiers with lexer.python.unicode.identifiers but I suppose some organisations may have style rules requiring ASCII identifiers.

Committed as change sets [7384c9], [b48472], [f6b4d0], and [e2523f].

Related

Commit: [7384c9]

Commit: [b48472]

Commit: [e2523f]

Commit: [f6b4d0]

Here's a further patch that creates more states for the f-string types and for expressions in the f-strings. I've been testing with the additions to python3.properties below. What's missing is support for nested strings like f'{"{}"}' -- what's needed is a scan backwards when a } is seen to see if it's in a string. Is anything like this done in other lexers? {} expressions are limited to one line so it would only need the current line.

Last edit: Neil Hodgson 2017-02-09

The Perl lexer has some code for complex interpolation but it isn't simple so I leave that lexer to Kein-Hong Man.

That looks worthwhile and I'd use that myself.

However, the trend in other editors and highlighted snippets on the web seems to be towards lexing expressions within interpolated strings like other expressions instead of as a single style.

Here is VSCode with some JavaScript template strings.

My initial thought was to do something like that; it's why I was asking about using indicators. I was envisioning setting a background color for the embedded expressions, but that doesn't seem to be done in the VSCode example so maybe it's not needed. Needing 4 different states for each kind of token inside an expression seems a bit inelegant but maybe it's the way to go.

Using an extra 'inside-f-string-expression' state for each primary state is quite open-ended as arbitrary sets of identifiers can now be highlighted with the sub-styles feature. There is some precedent for this as the C++ preprocessor adds an inactive state for every primary state.
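As a rough illustration of what "an extra state for each primary state" means, the sketch below pairs every primary state with an in-expression twin by setting one flag bit. The flag value, the `Primary` state numbers, and the helper names are all assumptions made for this sketch; they do not describe how any existing lexer encodes its states.

```python
from enum import IntEnum

# Hypothetical flag bit marking "inside an f-string expression".
IN_FSTRING_EXPR = 0x40


class Primary(IntEnum):
    # Hypothetical primary states; every value must stay below the flag bit.
    DEFAULT = 0
    NUMBER = 1
    OPERATOR = 2
    IDENTIFIER = 3


def enter_expression(state: int) -> int:
    """Return the in-expression twin of a primary state."""
    return state | IN_FSTRING_EXPR


def in_expression(state: int) -> bool:
    """True when the state carries the in-expression flag."""
    return bool(state & IN_FSTRING_EXPR)


def primary_of(state: int) -> Primary:
    """Strip the flag to recover the underlying primary state."""
    return Primary(state & ~IN_FSTRING_EXPR)
```

Every primary state gains a twin, so the number of styles an application has to configure doubles, and states created through sub-styles would need twins as well.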

If an indicator were to be used, I'd want to start adding more help for applications to recognise this so they can adapt. May require adding to the ILexer interface which is a heavy change.

It would be OK to add your lex-f-string.diff if that was going to be popular but I don't want to go down a branch that may be overtaken by something else leaving an unused feature with maintenance costs.

Is the lex function always called with the beginning of a line as the start position? If so, the lexer could just track whether it's in an expression because expressions are limited to one line.

Yes, lexing is always called for a range that starts at the start of a line. So local variables can be used to track state within a line.

New patch that lexes expressions within f-strings just like they were outside of the f-string. A local variable is used to track whether characters are in an f-string expression.
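The idea of tracking expression state in local variables while scanning a single line can be sketched as follows. This is an illustrative Python model, not the committed lexer code: `expression_spans` is a hypothetical helper that takes the text between the quotes of a one-line f-string and returns the index ranges of the embedded expressions. It scans forward rather than backward, which also copes with nested strings such as f'{"{}"}'.

```python
from typing import List, Tuple


def expression_spans(body: str) -> List[Tuple[int, int]]:
    """Return (start, end) index pairs of the {...} expressions in body.

    body is the text between the quotes of a single-line f-string.
    A span covers everything between the outer braces, including any
    conversion or format spec.
    """
    spans = []
    depth = 0          # > 0 while inside an expression; counts open brackets
    nested_quote = ""  # quote character of a string nested in the expression
    start = 0
    i = 0
    while i < len(body):
        ch = body[i]
        if depth == 0:
            if ch == "{":
                if body.startswith("{{", i):
                    i += 2  # doubled brace is a literal '{'
                    continue
                depth = 1
                start = i + 1
            elif ch == "}" and body.startswith("}}", i):
                i += 2      # doubled brace is a literal '}'
                continue
        elif nested_quote:
            if ch == nested_quote:
                nested_quote = ""  # nested string ends
        elif ch in "'\"":
            nested_quote = ch      # braces inside this string are ignored
        elif ch in "{[(":
            depth += 1
        elif ch in "}])":
            depth -= 1
            if depth == 0:
                spans.append((start, i))
        i += 1
    if depth > 0:
        # Line ended inside an expression (syntactically invalid input):
        # close the span at end of line instead of carrying state over.
        spans.append((start, len(body)))
    return spans
```

For the body `hello {name}` this yields one span covering `name`, and for `{"{}"}` one span covering `"{}"`. Because all state lives in local variables that are reset for each line, an unterminated expression cannot leak into the following line.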

New version of the patch that handles eol's in f-string expressions and other cases where the string is syntactically invalid.

Committed as [89ef29].

Small changes made to formatting, loop counter scope, and using ELEMENTS() instead of hard-coding size of stack.

I expect there will be some more options over this area in the future but they can now be in response to use of this implementation.

Related

Commit: [89ef29]

Thanks. I think you committed the first f-string-no-expr.diff rather than the second though. The second one fixes problems with lines ending with f'{a<eol> and the like. Do you want a new diff against the current hg?

Yes, your diff arrived after I had pushed. I'll look at your update tomorrow.

Committed as [ebedfe] with merge from [89ef29] and minor formatting and const changes.

Related

Commit: [89ef29]

Commit: [ebedfe]