Madagascar / News: Recent posts

Hello Github!

In a major administrative change, the code repository of the Madagascar project, which has been hosted by SourceForge for more than 9 years and more than 12,000 revisions, is being moved to GitHub. The location of the Madagascar project on GitHub is https://github.com/ahay/ (www.reproducibility.org)

In a major administrative change, the code repository of the Madagascar project, which has been hosted by SourceForge for more than 9 years and more than 12,000 revisions, is being moved to GitHub. The location of the Madagascar project on GitHub is https://github.com/ahay/ (www.reproducibility.org)

The move has been discussed several times previously. The former major hub of open-source projects, SourceForge has been loosing popularity among open-source software developers and went through some bad publicity recently because of their practice of injecting malware into open-source projects. The final straw, which prompted our move, was the whole cite going down in July 2015 and taking more than a week to restore access to repositories. DHI Group, Inc. announced today its plans to sell the Slashdot Media business, which includes Slashdot and SourceForge.

GitHub brings a social-networking aspect to open-source software development, as well as many other useful tools and enhancements. Its success story was desribed in the recent article in Wired How GitHub Conquered Google, Microsoft, and Everyone Else.

The repository has been converted to Git, but if you prefer to use Subversion, you can continue to do so thanks to the svn bridge. See http://www.ahay.org/wiki/Download#Current_development_version (www.reproducibility.org) for instructions. If you need a developer access to commit changes directly to the master branch of the repository, please register at GitHub and send your GitHub login name to the project administrator. Everyone else should be able to participate in the project development by using Git's preferred way of "pull requests".

The repository has been converted to Git, but if you prefer to use Subversion, you can continue to do so thanks to the svn bridge. See http://www.ahay.org/wiki/Download#Current_development_version (www.reproducibility.org) for instructions. If you need a developer access to commit changes directly to the master branch of the repository, please register at GitHub and send your GitHub login name to the project administrator. Everyone else should be able to participate in the project development by using Git's preferred way of "pull requests".

... read more

More colormaps

The most popular colormap in Madagascar, other than the default greyscale, is color=j, modeled after "jet", which used to be the default colormap in MATLAB. More than 1,000 Madagascar examples use color=j.

In October 2014, with release R2014b (Version 8.4), MATLAB switched the default colormap to a different one, called "parula". The "parula" colormap is copyrighted by MathWorks as a result of a creative process (solving an optimization problem). No open-source license is given to use it outside of MATLAB. According to Steve Eddins, "this colormap is MathWorks intellectual property, and it would not be appropriate or acceptable to copy or re-use it in non-MathWorks plotting tools."

Stéfan van der Walt and Nathaniel Smith from the Berkeley Institute for Data Science have developed several new open-source colormaps with good perceptual properties. One of them (named "viridis") is proposed as a good replacement for "jet" and as the default colormap in matplotlib 2.0.

Is it a good colormap? We can find out by using tools from Matteo Niccoli's tutorial on colormaps.

This analysis shows the intensity and lightness distributions of "viridis" are nicely linear. In his presentation at SciPy-2015, Nathaniel Smith explains the rational for this choice.

... read more

Tutorial on seismic petrophysics, Part 1

The example in rsf/tutorials/petro1 reproduces the tutorial from Alessandro Amato del Monte on seismic petrophysics (Part 1). The tutorial was published in the April 2015 issue of The Leading Edge.

Madagascar users are encouraged to try improving the results.

... read more

Tutorial on well-tie calculus

The example in rsf/tutorials/well-tie reproduces the tutorial from Evan Bianco on well-tie calculus. The tutorial was published in the June 2014 issue of The Leading Edge.

Madagascar users are encouraged to try improving the results.

... read more

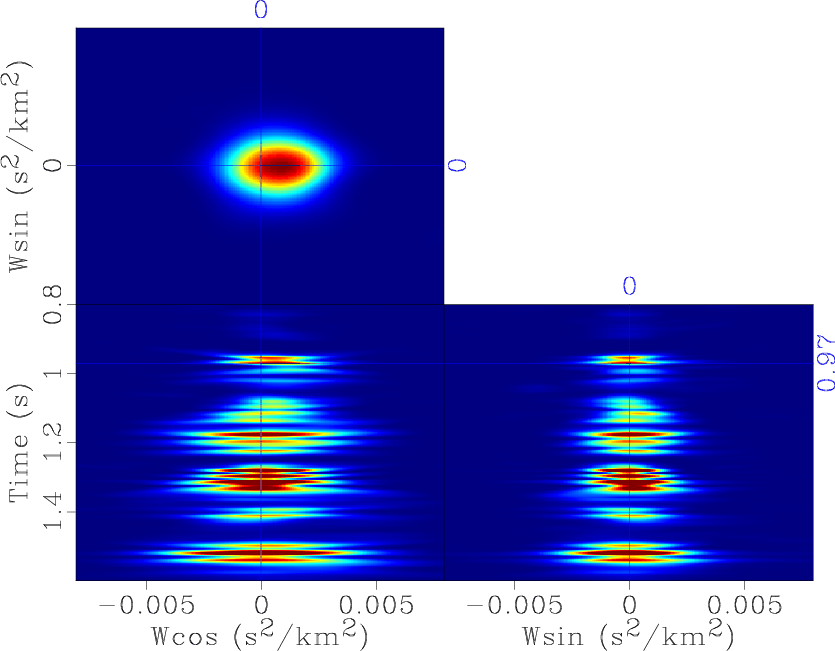

Similarity-weighted semblance

A new paper is added to the collection of reproducible documents:

Velocity analysis using similarity-weighted semblance

Weighted semblance can be used for improving the performance of the traditional semblance for specific datasets. We propose a novel approach for prestack velocity analysis using weighted semblance. The novelty comes from a different weighting criteria in which the local similarity between each trace and a reference trace is used. On one hand, low similarity corresponds to a noise point or a point indicating incorrect moveout, which should be given a small weight. On the other hand, high similarity corresponds to a point indicating correct moveout, which should be given a high weight. The proposed approach can also be effectively used for analyzing AVO anomalies with increased resolution compared with AB semblance. Both synthetic and field CMP gathers demonstrate higher resolution using the proposed approach. Applications of the proposed method on a prestack dataset further confirms that the stacked data using the similarity-weighted semblance can obtain better energy-focused events, which indicates a more precise velocity picking.

link

Test case for PEF estimation

Another old paper is added to the collection of reproducible documents:

Test case for PEF estimation with sparse data II

The two-stage missing data interpolation approach of Claerbout (1998) (henceforth, the GEE approach) has been applied with great success (Fomel et al., 1997 (www.reproducibility.org); Clapp et al., 1998 (www.reproducibility.org); Crawley, 2000) in the past. The main strength of the approach lies in the ability of the prediction error filter (PEF) to find multiple, hidden correlation in the known data, and then, via regularization, to impose the same correlation (covariance) onto the unknown model. Unfortunately, the GEE approach may break down in the face of very sparsely-distributed data, as the number of valid regression equations in the PEF estimation step may drop to zero. In this case, the most common approach is to simply retreat to regularizing with an isotropic differential filter (e.g., Laplacian), which leads to a minimum-energy solution and implicitly assumes an isotropic model covariance.

A pressing goal of many SEP researchers is to find a way of estimating a PEF from sparse data. Although new ideas are certainly required to solve this interesting problem, Claerbout (2000) proposes that a standard, simple test case first be constructed, and suggests using a known model with vanishing Gaussian curvature. In this paper, we present the following, simpler test case, which we feel makes for a better first step.

- Model: Deconvolve a 2-D field of random numbers with a simple dip filter, leading to a ``plane-wave'' model.

- Filter: The ideal interpolation filter is simply the dip filter used to create the model.

- Data: Subsample the known model randomly and so sparsely as to make conventional PEF estimation impossible.

We use the aforementioned dip filter to regularize a least squares estimation of the missing model points and show that this filter is ideal, in the sense that the model residual is relatively small. Interestingly, we found that the characteristics of the true model and interpolation result depended strongly on the accuracy (dip spectrum localization) of the dip filter. We chose the 8-point truncated sinc filter presented by Fomel (2000). We discuss briefly the motivation for this choice.

link

Reproducible research and PDF files

Claerbout's principle of reproducible research, as formulated by Buckheit and Donoho (1995), states:

An article about computational science in a scientific publication is not the scholarship itself, it is merely advertising of the scholarship. The actual scholarship is the complete software development environment and the complete set of instructions which generated the figures.

The geophysics class in the SEGTeX package features a new option: reproduce, which attaches SConstruct files or other appropriate code (Matlab scripts, Python scripts, etc.) directly to the PDF file of the paper, with a button under every reproducible figure for opening the corresponding script.

Unfortunately, not every PDF viewer supports this kind of links. The screenshot below shows evince viewer on Linux, where clicking the button opens the file with gedit editor.

Double-elliptic approximation in TI media

Another old paper is added to the collection of reproducible documents:

The double-elliptic approximation in the group and phase domains

Elliptical anisotropy has found wide use as a simple approximation to transverse isotropy because of a unique symmetry property (an elliptical dispersion relation corresponds to an elliptical impulse response) and a simple relationship to standard geophysical techniques (hyperbolic moveout corresponds to elliptical wavefronts; NMO measures horizontal velocity, and time-to-depth conversion depends on vertical velocity). However, elliptical anisotropy is only useful as an approximation in certain restricted cases, such as when the underlying true anisotropy does not depart too far from ellipticity or the observed angular aperture is small. This limitation is fundamental, because there are only two parameters needed to define an ellipse: the horizontal and vertical velocities. (Sometimes the orientation of the principle axes is also included as a free parameter, but usually not.)

In a previous SEP report Muir (1990) showed how to extend the standard elliptical approximation to a so-called double-elliptic form. (The relation between the elastic constants of a TI medium and the coefficients of the corresponding double-elliptic approximation is developed in a companion paper, (Muir, 1991).) The aim of this new approximation is to preserve the useful properties of elliptical anisotropy while doubling the number of free parameters, thus allowing a much wider range of transversely isotropic media to be adequately fit. At first glance this goal seems unattainable: elliptical anisotropy is the most complex form of anisotropy possible with a simple analytical form in both the dispersion relation and impulse response domains. Muir's approximation is useful because it nearly satisfies both incompatible goals at once: it has a simple relationship to NMO and true vertical and horizontal velocity, and to a good approximation it has the same simple analytical form in both domains of interest.

The purpose of this short note is to test by example how well the double-elliptic approximation comes to meeting these goals:

- Simple relationships to NMO and true velocities on principle axes.

- Simple analytical form for both the dispersion relation and impulse response.

- Approximates general transversely isotropic media well.

The results indicate that the method should work well in practice.

link

Literate programming with IPython notebooks

Literate programming is a concept promoted by Donald Knuth, the famous computer scientist (and the author of the Art of Computer Programming.) According to this concept, computer programs should be written in a combination of the programming language (the usual source code) and the natural language, which explains the logic of the program.... read more

1M

Madagascar passes a symbolic mark of one million lines of code. Black Duck Open Hub reports that

In a Nutshell, Madagascar...

has had 12,444 commits made by 85 contributors

representing 1,098,223 lines of code

Amplitude balancing

Another old paper is added to the collection of reproducible documents:

Iterative least-square inversion for amplitude balancing

Variations in source strength and receiver amplitude can introduce a bias in the final AVO analysis of prestack seismic reflection data. In this paper we tackle the problem of the amplitude balancing of the seismic traces from a marine survey. We start with a 2-D energy map from which the global trend has been removed. In order to balance this amplitude map, we first invert for the correction coefficients using an iterative least-square algorithm. The coefficients are calculated for each shot position along the survey line, each receiver position in the recording cable, and each offset. Using these coefficients, we then correct the original amplitude map for amplitude variations in the shot, receiver, and offset directions.

link

Two-dimensional Hilbert transform

A new paper is added to the collection of reproducible documents:

... read more

Structure-constrained acoustic impedance

A new paper is added to the collection of reproducible documents:

Structure-constrained relative acoustic impedance using stratigraphic coordinates

Acoustic impedance inversion involves conversion of seismic traces to a reflection coefficient time series, and then into acoustic impedance. The usual assumption for the transformation of post-stack seismic data into impedance , is that seismic traces can be modeled using the simple convolutional model. According to the convolutional model, a seismic trace is a normal-incidence record, which is an assumption that is strictly true only if the earth structure is composed of horizontal layers. In the presence of dipping layers, such an assumption is violated, which introduces bias in the result of impedance inversion. I propose to implement impedance inversion in the stratigraphic coordinate system, where the vertical direction is normal to reflectors and seismic traces represent normal-incidence seismograms. Tests on field data produce more accurate and detailed impedance results from inversion in the stratigraphic coordinate system, compared to impedance results using the conventional Cartesian coordinate system.

link

Summer events

Two exciting events are being planned for this summer.

The Madagascar School for Advanced Users is being planned for August 8-9, 2015. The school will take place in Qingdao, China, and will be hosted by the China University of Petroleum. Unlike previous Madagascar schools, the intended audience are not beginners but current users of Madagascar who want to learn more about advanced topics (parallel computing; graphical user interfaces; interfaces to C++, Python, and Matlab, etc.) More information is available at http://www.ahay.org/wiki/Qingdao_2015 (www.reproducibility.org).

The Working Workshop on 3D Seismic Data Processing is being planned for August 19-22, 2015. The workshop will take place in Houston and will be hosted by Rice University. Unlike previous working workshops, the participations is not limited to Madagascar users. Users of other software packages with interest in seismic field data processing are encouraged to participate. The workshop is being organized by Karl Schleicher, with support of SEG. More information is available at http://www.ahay.org/wiki/SEG_3D_Seismic_Processing_Working_Workshop_Houston_2015-_Land_3D (www.reproducibility.org)

link

Tutorial on seismic survey design

The example in rsf/tutorials/survey reproduces the tutorial from Evan Bianco on designing 3D seismic surveys. For more explanation, see Evian's blog post Laying out a seismic survey.

Madagascar users are encouraged to try improving the results. ... read more

Reproducible research in psychology

An article A Perfect Storm: The Record of a Revolution by Eric-Jan Wagenmakers, a mathematical psychologist from the Unversity of Amsterdam, describes a reproducibility revolution, which is taking place in psychology: ... read more

An article A Perfect Storm: The Record of a Revolution by Eric-Jan Wagenmakers, a mathematical psychologist from the Unversity of Amsterdam, describes a reproducibility revolution, which is taking place in psychology: ... read more

Program of the month: sfslant

sfslant is a T-X implementation of slant stack, also known as Radon transform or tau-p transform.

The two middle panels in the example below from cwp/geo2006TimeShiftImagingCondition/zicig show a time-shift common-image gather and its transformation by slant stack.

sfslant is a linear operator and has an adjoint flag adj=: When adj=n, the transformation is from tau-p to t-x. When adj=y, the transformation is from t-x to tau-p. The sampling on the transformed coordinate is controlled in the two cases by nx=, dx=, x0= or, respectively np=, dp=, p0=.

Antialiasing is enabled by default with anti=1 parameter. The central slope for antialiasing is given by p1=.

A space integration is sfslant generally requires a corrective "rho filter" (half-order differentiation). It is enabled by rho= parameter.

For an F-X implementation of the Radon transform, see sfradon.

... read more

Seislet-based MCA

A new paper is added to the collection of reproducible documents:

Seislet-based morphological component analysis using scale-dependent exponential shrinkage

Morphological component analysis (MCA) is a powerful tool used in image processing to separate different geometrical components (cartoons and textures, curves and points etc). MCA is based on the observation that many complex signals may not be sparsely represented using only one dictionary/transform, however can have sparse representation by combining several over-complete dictionaries/transforms. In this paper we propose seislet-based MCA for seismic data processing. MCA algorithm is reformulated in the shaping-regularization framework. Successful seislet-based MCA depends on reliable slope estimation of seismic events, which is done by plane-wave destruction (PWD) filters. An exponential shrinkage operator unifies many existing thresholding operators and is adopted in scale-dependent shaping regularization to promote sparsity. Numerical examples demonstrate a superior performance of the proposed exponential shrinkage operator and the potential of seislet-based MCA in application to trace interpolation and multiple removal.

link

Tutorial on image resolution

The example in rsf/tutorials/images reproduces the tutorial from Matt Hall on playing with image resolution. For more explanation, see Matt's blog post R is for Resolution.

Madagascar users are encouraged to try improving the results. ... read more

madagascar-1.7 released

The 1.7 stable release features 21 new reproducible papers and multiple other enhancements including improved tools for parallel computing developed at the Second Working Workshop.

According to the SourceForge statistics, the previous 1.5 stable distribution has been downloaded more than 4,000 times. The top country (with 24% of all downloads) was USA, followed by China, Colombia, Germany, and Brazil.

According to Openhub.net (www.reproducibility.org) (last updated in January 2015), the year of 2014 was a period of a high development activity, with 33 contributors making 1,876 commits to the repository (up 16% from the previous year). Openhub.net says that Madagascar "has a well established, mature codebase maintained by a very large development team with stable year-over-year commits " and estimated 239 man-years of effort (an estimated development cost of $13 million).

How to make your research irreproducible

Yesterday (April 1, 2015) a group of computer scientists from UK (Neil Chue Hong, Tom Crick, Ian Gent, and Lars Kotthoff) announced a seminal paper Top Tips to Make Your Research Irreproducible.

... read more

... read more

Fast 3D velocity scan

A new paper is added to the collection of reproducible documents:

A fast algorithm for 3D azimuthally anisotropic velocity scan

Conventional velocity scan can be computationally expensive for large-size seismic data, particularly when the presence of anisotropy requires multiparameter estimation. We introduce a fast algorithm for 3D azimuthally anisotropic velocity scan, which is a generalization of the previously proposed 2D butterfly algorithm for hyperbolic Radon transform. To compute the semblance in a two-parameter residual moveout domain, the numerical complexity of our algorithm is roughly

as opposed to

of the straightforward velocity scan, with

being representative of the number of points in either dimension of data space or parameter space. We provide both synthetic and field-data examples to illustrate the efficiency and accuracy of the algorithm.

link

Multiple suppression using PEF

Another old paper is added to the collection of reproducible documents:

Multiple suppression using prediction-error filter

I present an approach to multiple suppression, that is based on the moveout between primary and multiple events in the CMP gather. After normal moveout correction, primary events will be horizontal, whereas multiple events will not be. For each NMOed CMP gather, I reorder the offset in random order. Ideally, this process has little influence on the primaries, but it destroys the shape of the multiples. In other words, after randomization of the offset order, the multiples appear as random noise. This "man-made" random noise can be removed using prediction-error filter (PEF). The randomization of the offset order can be regarded as a random process, so we can apply it to the CMP gather many times and get many different samples. All the samples can be arranged into a 3-D cube, which is further divided into many small subcubes. A 3-D PEF can then be estimated from each subcube and re-applied to it to remove the multiple energy. After that, all the samples are averaged back into one CMP gather, which is supposed to be free of multiple events. In order to improve the efficiency of the algorithm of estimating the PEF for each subcube, except for the first subcube which starts with a zero-valued initial guess, all the subsequent subcubes take the last estimated PEF as an initial guess. Therefore, the iteration count can be reduced to one step for all the subsequent subcubes with little loss of accuracy. Three examples demonstrate the performance of this new approach, especially in removing the near-offset multiples.

link

FWI on GPU

A new paper is added to the collection of reproducible documents:

A graphics processing unit implementation of time-domain full-waveform inversion

The graphics processing unit (GPU) has become a popular device for seismic imaging and inversion due to its superior speedup performance. In this paper we implement GPU-based full waveform inversion (FWI) using the wavefield reconstruction strategy. Because the computation on GPU is much faster than CPU-GPU data communication, in our implementation the boundaries of the forward modeling are saved on the device to avert the issue of data transfer between host and device. The Clayton-Enquist absorbing boundary is adopted to maintain the efficiency of GPU computation. A hybrid nonlinear conjugate gradient algorithm combined with the parallel reduction scheme is utilized to do computation in GPU blocks. The numerical results confirm the validity of our implementation.

link

Tutorial on phase and the Hilbert transform

The example in rsf/tutorials/hilbert reproduces the tutorial from Steve Purves on phase and the Hilbert transform. The tutorial was published in the October 2014 issue of The Leading Edge.

Madagascar users are encouraged to try improving the results.

See also: ... read more